Recurrent Neural Networks (RNNs) are a class of neural network architectures used in deep learning to process sequential data. Unlike traditional feedforward neural networks, RNNs keep a memory (a hidden state) that captures information about what has been computed so far. In other words, they use information from previous inputs to influence the output they produce.

RNNs are called “recurrent” because they perform the same task for every element in a sequence, with each output depending on the previous computations. RNNs have been used to power smart technologies like Apple’s Siri and Google Translate.
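To make the recurrence described above concrete, here is a minimal sketch of a one-unit RNN in plain Python. The function names (`rnn_step`, `run_rnn`) and the weight values are illustrative assumptions, not taken from any particular library or production system.

```python
import math

def rnn_step(x, h, w_xh, w_hh, b):
    """Apply one recurrent step: mix the current input with the previous state."""
    return math.tanh(w_xh * x + w_hh * h + b)

def run_rnn(sequence, w_xh=0.5, w_hh=0.8, b=0.0):
    """Run the same step over every element, carrying the hidden state forward."""
    h = 0.0  # the "memory" starts empty
    states = []
    for x in sequence:
        h = rnn_step(x, h, w_xh, w_hh, b)  # same weights reused at every position
        states.append(h)
    return states

# The second input is 0.0 in both runs, yet the final states differ,
# because the first input is remembered through the hidden state.
with_history = run_rnn([1.0, 0.0])
without_history = run_rnn([0.0, 0.0])
```

The same function and the same weights are applied at every position; only the hidden state changes, which is what lets earlier inputs influence later outputs.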

However, with the advent of transformer-based models like ChatGPT, the landscape of natural language processing (NLP) has shifted. While transformers have revolutionized NLP tasks, their memory and computational requirements scale quadratically with sequence length, demanding more resources.

Enter RWKV

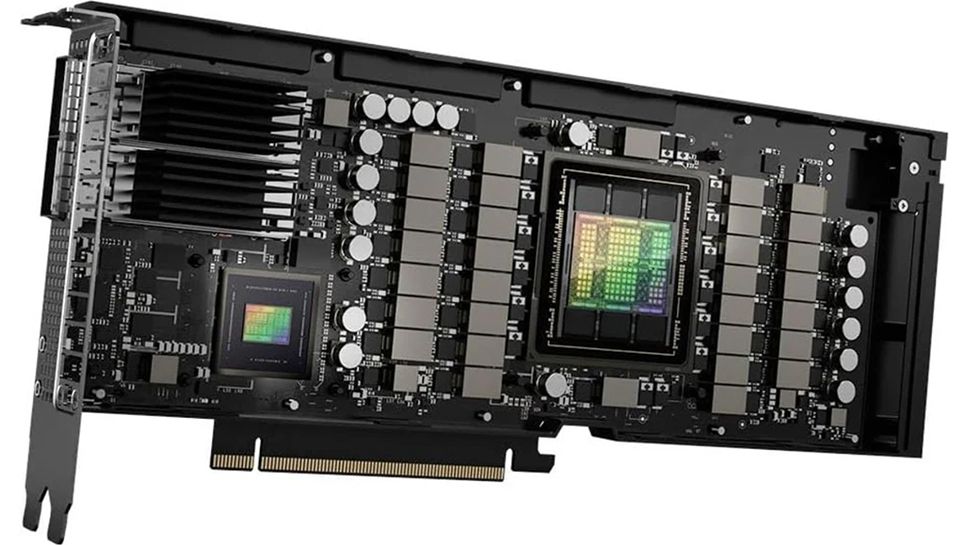

Now, a new open-source project, RWKV, is offering a promising solution to the GPU power conundrum. The project, backed by the Linux Foundation, aims to drastically reduce the compute requirements of GPT-level large language models (LLMs), potentially by up to 100x.

RNNs exhibit linear scaling in memory and computational requirements, but struggle to match the performance of transformers due to their limitations in parallelization and scalability. This is where RWKV comes into play.
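The difference between quadratic and linear scaling is easy to see with a little arithmetic. The sketch below uses deliberately simplified cost models (hypothetical helper names, one attention head, one layer, constant factors ignored), so treat the numbers as illustrations of growth rates rather than real resource measurements.

```python
def pairwise_interactions(seq_len):
    """Entries in a full self-attention score matrix: every token against every token."""
    return seq_len * seq_len

def recurrent_steps(seq_len):
    """Sequential state updates an RNN performs: one per token."""
    return seq_len

# Compare how each cost grows as the sequence gets longer.
for n in (1_000, 2_000, 4_000):
    print(f"tokens={n:>5}  attention={pairwise_interactions(n):>10}  rnn={recurrent_steps(n):>5}")
```

Each time the sequence length doubles, the attention cost in this model quadruples while the recurrent cost only doubles.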

RWKV, or Receptance Weighted Key Value, is a novel model architecture that combines the parallelizable training efficiency of transformers with the efficient inference of RNNs. The result? A model that requires significantly fewer resources (VRAM, CPU, GPU, etc.) for inference and training, while maintaining high-quality performance. It also scales linearly with context length and is generally better trained in languages other than English.
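One way to see how a single model can be trained like a transformer and run like an RNN is to write the same computation in two forms. The sketch below is a heavily simplified, single-channel illustration loosely based on the decayed weighted average published for RWKV-4. It leaves out receptance gating, token shift, and numerical stabilization, and the function names and the parameters `w` (decay) and `u` (bonus for the current token) are assumptions made for illustration, not the project's actual code.

```python
import math

def wkv_direct(keys, values, w, u):
    """Compute each output straight from its prefix.

    Every position is independent of the others here, which is the property
    that lets this form be parallelized across the sequence during training.
    """
    outputs = []
    for t in range(len(keys)):
        bonus = math.exp(u + keys[t])  # extra weight for the current token
        num = bonus * values[t]
        den = bonus
        for i in range(t):
            # Older tokens are down-weighted exponentially by the decay w.
            weight = math.exp(-(t - 1 - i) * w + keys[i])
            num += weight * values[i]
            den += weight
        outputs.append(num / den)
    return outputs

def wkv_recurrent(keys, values, w, u):
    """Compute the same outputs one token at a time with a fixed-size state."""
    num_state, den_state = 0.0, 0.0  # running weighted sums over past tokens
    decay = math.exp(-w)
    outputs = []
    for k, v in zip(keys, values):
        bonus = math.exp(u + k)
        outputs.append((num_state + bonus * v) / (den_state + bonus))
        # Fold the current token into the state and decay everything older.
        num_state = decay * num_state + math.exp(k) * v
        den_state = decay * den_state + math.exp(k)
    return outputs

keys = [0.1, -0.3, 0.7, 0.2]
values = [1.0, 2.0, -1.0, 0.5]
direct = wkv_direct(keys, values, w=0.5, u=0.3)
recurrent = wkv_recurrent(keys, values, w=0.5, u=0.3)
```

Both functions produce the same outputs, but the recurrent form only keeps two numbers of state no matter how long the sequence is, which is where the efficient inference comes from in this simplified picture.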

Despite these promising features, the RWKV model is not without its challenges. It is sensitive to prompt formatting and weaker at tasks that require looking back at earlier parts of the context. However, these issues are being addressed, and the model’s potential benefits far outweigh the current limitations.

The implications of the RWKV project are profound. Instead of needing 100 GPUs to train an LLM, an RWKV model could deliver similar results with fewer than 10 GPUs. This not only makes the technology more accessible but also opens up possibilities for further advancements.