For all the benefits of the best AI image generators, many of us are worried about a torrent of misinformation and fakery. Meta, it seems, didn’t get the memo – in a Threads post, it has recommended that those of us who missed the recent return of the Northern Lights should simply fake shots using Meta AI instead.

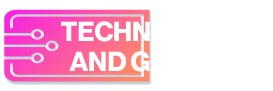

The Threads post, spotted by The Verge, is titled “POV: you missed the northern lights IRL, so you made your own with Meta AI” and includes AI-generated images of the phenomenon over landmarks like the Golden Gate Bridge and Las Vegas.

Meta has received a justifiable roasting for its tone-deaf post in the Threads comments. “Please sell Instagram to someone who cares about photography” noted one response, while NASA software engineer Kevin M. Gill remarked that fake images like Meta’s “make our cultural intelligence worse”.

It’s possible that Meta’s Threads post was just an errant social media post rather than a reflection of the company’s broader view on how Meta AI’s image generator should be used. And it could be argued that there’s little wrong with generating images like Meta’s examples, as long as creators are clear about their origin.

The problem is that the tone of Meta’s post suggests people should use AI to mislead their followers into thinking that they’d photographed a real event – and for many, that’s crossing a line that could have more serious repercussions for news events that are more consequential than the Northern Lights.

But where is the line?

Is posting AI-generated photos of the Northern Lights any worse than using Photoshop’s Sky Replacement tool (above)? Or editing your photos with Adobe’s Generative Fill? These are the kinds of questions that generative AI tools are raising on a daily basis – and this Meta misstep is an example of how thin the line can be.

Many would argue that it ultimately comes down to transparency. The issue with Meta’s post (which is still live) isn’t the AI-generated Northern Lights images, but the suggestion that you could use them to simply fake witnessing a real news event.

Transparency and honesty around an image’s origins are as much the responsibility of the tech companies as they are of their users. That’s why Google Photos is, according to Android Authority, testing new metadata that’ll tell you whether or not an image is AI-generated.

Adobe has made similar efforts with its Content Authenticity Initiative (CAI), which has been attempting to fight visual misinformation with its own metadata standard. Google recently announced that it will finally be using the CAI’s guidelines to label AI images in Google Search results. But the sluggishness in adopting a standard leaves us in limbo as AI image generators become ever more powerful.

Let’s hope the situation improves soon – in the meantime, it seems incumbent on social media users to be honest when posting fully AI-generated images, and certainly on tech giants not to encourage them to do the opposite.