In a relatively short time, artificial intelligence (AI) has become integrated into our everyday lives. Almost half (45%) of the US population now uses generative AI tools, and millions across the world use services such as ChatGPT to draft emails or Midjourney to generate new visuals. AI is propelling the arrival of a new digital era, enhancing our speed and efficiency in tackling creative or professional challenges, all while helping to drive new innovations.

The use of AI does not stop there. It has become a vital component of essential services, ensuring the smooth operation of our society – from loan approvals and higher education admissions, to access to mobility platforms, and soon, access to medical care. Online identity verification has evolved from opening a bank account to encompassing a wide range of uses on the internet.

Nonetheless, AI systems can behave in a biased manner towards end-users. In recent months, Uber Eats and Google have discovered how much the use of AI can threaten the legitimacy and reputation of their online services. However, humans are also vulnerable to biases. These can be systemic, as shown by the bias in facial recognition – the tendency to better recognize members of one’s own ethnic group (OGB, or Own Group Bias) – a phenomenon now well documented.

The challenge lies here. Online services have become the backbone of the economy with 81% of people saying they access services online daily. With lower costs and faster execution, AI is an attractive choice for businesses managing large customer volumes. However, despite all the advantages, it is crucial to recognize the biases it presents and the responsibility of companies to implement safeguards to protect their reputation and the wider economy.

A bias prevention strategy must focus on four key pillars – identifying and measuring bias, staying alert to hidden variables and hasty conclusions, designing rigorous training methods, and adapting the solution to the use case.

VP of Applied AI, Onfido.

Pillar 1: Detecting and assessing bias

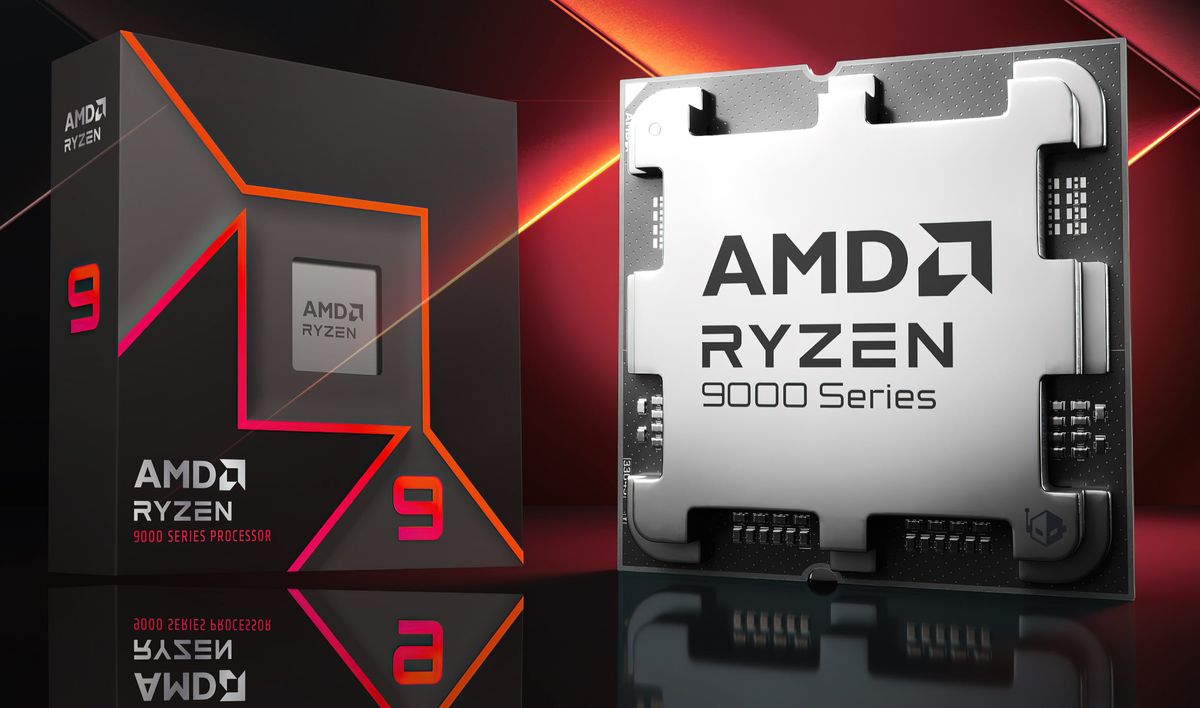

The battle against bias starts by implementing robust processes for its measurement. AI biases frequently lurk within extensive datasets, becoming apparent only after untangling several correlated variables.

It is therefore crucial for companies using AI to establish good practices such as reporting measurements with confidence intervals, using datasets of appropriate size and variety, and applying appropriate statistical tools in the hands of qualified people.
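As a minimal sketch of what measuring with a confidence interval can look like, the Python snippet below computes a Wilson score interval for a per-group rejection rate. The group names and counts are hypothetical, and the Wilson interval is one common choice among several, not a method prescribed by this article.

```python
import math

def wilson_interval(rejections, total, z=1.96):
    """Wilson score interval for a binomial proportion.

    z=1.96 corresponds to roughly 95% confidence.
    """
    if total <= 0:
        raise ValueError("total must be positive")
    p = rejections / total
    denom = 1 + z**2 / total
    centre = (p + z**2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)

# Hypothetical counts: (rejections, verification attempts) per group.
groups = {"group_a": (50, 1000), "group_b": (8, 100)}

for name, (rej, n) in groups.items():
    low, high = wilson_interval(rej, n)
    print(f"{name}: rate={rej / n:.3f}, interval=({low:.3f}, {high:.3f})")
```

The smaller group gets a much wider interval, which is the point: an apparent gap between two groups is only meaningful if the intervals are narrow enough to separate them.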

These companies must also strive to be as transparent as possible about these biases, for example by publishing public reports such as the “Bias Whitepaper” that Onfido published in 2022. These reports should be based on real production data and not on synthetic or test data.

Public benchmarking tools such as the NIST FRVT (Face Recognition Vendor Test) also produce bias analyses that these companies can use to communicate about bias and to reduce it in their systems.

Based on these observations, companies can understand where biases are most likely to occur in the customer journey and work to find a solution – often by training the algorithms with more complete datasets to produce fairer results. This lays the foundation for rigorous bias treatment and increases the value of the algorithm and its user journey.

Pillar 2: Watch out for concealed variables and hasty conclusions

The bias of an AI system is often hidden in multiple correlated variables. Let’s take the example of facial recognition between biometrics and identity documents (“face matching”). This step is key in the user’s identity verification.

A first analysis shows that the performance of this recognition is worse for people with dark skin than for the average person. It is tempting in these conditions to conclude that, by design, the system penalizes people with dark skin.

However, by pushing the analysis further, we observe that the proportion of people with dark skin is higher in African countries than in the rest of the world. Moreover, these African countries use, on average, identity documents of lower quality than those observed in the rest of the world.

This lower document quality explains most of the relatively poor performance of facial recognition. Indeed, if we measure the performance of facial recognition for people with dark skin, restricting ourselves to European countries that use higher-quality documents, we find that the bias practically disappears.

In statistical language, we say that the variables “document quality” and “country of origin” are confounding with respect to the variable “skin colour.”

We provide this example not to suggest that algorithms are unbiased (they are biased) but to emphasize that bias measurement is complex and prone to hasty, incorrect conclusions.

It is therefore crucial to conduct a comprehensive bias analysis and to study all the hidden variables that may influence the bias.
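The confounding effect described above can be illustrated with a small stratified analysis in Python. All figures below are invented for illustration and are constructed so that the gap vanishes entirely within each document-quality stratum; real data would rarely be this clean, and the field names are assumptions.

```python
from collections import defaultdict

# Hypothetical records: (skin_tone, document_quality, attempts, false_rejections)
records = [
    ("dark", "low", 800, 80),
    ("dark", "high", 200, 4),
    ("light", "low", 200, 20),
    ("light", "high", 800, 16),
]

def rejection_rates(records, key_fn):
    """Aggregate attempts and rejections by key, then return rate per key."""
    attempts = defaultdict(int)
    rejections = defaultdict(int)
    for tone, quality, n, rej in records:
        key = key_fn(tone, quality)
        attempts[key] += n
        rejections[key] += rej
    return {key: rejections[key] / attempts[key] for key in attempts}

# Naive view: group only by skin tone.
overall = rejection_rates(records, lambda tone, quality: tone)

# Stratified view: compare skin tones within the same document quality.
stratified = rejection_rates(records, lambda tone, quality: (tone, quality))

for key, rate in sorted(overall.items()):
    print(key, round(rate, 3))
for key, rate in sorted(stratified.items()):
    print(key, round(rate, 3))
```

In this toy data the naive comparison shows a large gap between skin tones, while the stratified comparison shows identical rates once document quality is held fixed. The gap comes from the uneven mix of document quality across groups, not from skin tone itself.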

Pillar 3: Develop rigorous training methodologies

The training phase of an AI model offers the best opportunity to reduce its biases. It is indeed difficult to compensate for this bias afterward without resorting to ad-hoc methods that are not robust.

The datasets used for learning are the main lever that allows us to influence learning. By correcting the imbalances in the datasets, we can significantly influence the behavior of the model.

Let’s take an example. Some online services may be used more frequently by a person of a given gender. If we train a model on a uniform sample of the production data, this model will probably behave more robustly on the majority gender, to the detriment of the minority gender, which will see the model behave more randomly.

We can correct this bias by sampling the data of each gender equally. This will probably result in a relative reduction in performance for the majority gender, but to the benefit of the minority gender. For a critical service (such as an application acceptance service for higher education), this balancing of the data makes perfect sense and is easy to implement.
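One simple way to sample each group equally is to downsample every group to the size of the smallest one. The sketch below assumes rows are plain dictionaries with a hypothetical `gender` field; oversampling the minority group or reweighting the loss are alternative techniques not shown here.

```python
import random
from collections import defaultdict

def balanced_sample(rows, group_key, seed=0):
    """Downsample every group to the size of the smallest group."""
    rng = random.Random(seed)  # fixed seed so the sample is reproducible
    by_group = defaultdict(list)
    for row in rows:
        by_group[row[group_key]].append(row)
    target = min(len(members) for members in by_group.values())
    sample = []
    for members in by_group.values():
        sample.extend(rng.sample(members, target))
    rng.shuffle(sample)
    return sample

# Hypothetical production data in which 90% of rows come from one gender.
rows = [{"id": i, "gender": "f" if i % 10 == 0 else "m"} for i in range(1000)]

balanced = balanced_sample(rows, "gender")
counts = defaultdict(int)
for row in balanced:
    counts[row["gender"]] += 1
print(dict(counts))
```

Downsampling discards majority-group data, which is consistent with the trade-off noted above: some performance is given up for the majority group in exchange for more even behavior across groups.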

Online identity verification is often associated with critical services. This verification, which often involves biometrics, requires the design of robust training methods that reduce biases as much as possible on the variables exposed to biometrics, namely: age, gender, ethnicity, and country of origin.

Finally, collaboration with regulators, such as the Information Commissioner’s Office (ICO), allows us to step back and think strategically about reducing biases in models. In 2019, Onfido worked with the ICO to reduce biases in its facial recognition software, which led Onfido to drastically reduce the performance gaps between age and geographic groups of its biometric system.

Pillar 4: Tailor the solution to the specific use case

There is no single measure of bias. In its glossary on model fairness, Google identifies at least three different definitions for fairness, each of which is valid in its own way but leads to very different model behaviors.

How, for example, should one choose between “forced” demographic parity and equal opportunity, which takes into account the variables specific to each group?
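To make the contrast concrete, the sketch below computes both metrics on invented data, using the common definitions: demographic parity compares acceptance rates across groups, while equal opportunity compares acceptance rates among qualified individuals only. The `qualified` and `accepted` fields and all counts are hypothetical.

```python
def selection_rate(rows):
    """Share of all individuals accepted (compared under demographic parity)."""
    return sum(r["accepted"] for r in rows) / len(rows)

def true_positive_rate(rows):
    """Share of qualified individuals accepted (compared under equal opportunity)."""
    qualified = [r for r in rows if r["qualified"]]
    return sum(r["accepted"] for r in qualified) / len(qualified)

def make_group(qualified_accepted, qualified_rejected, unqualified_rejected):
    """Build hypothetical rows; no unqualified individual is accepted here."""
    rows = [{"qualified": True, "accepted": True}] * qualified_accepted
    rows += [{"qualified": True, "accepted": False}] * qualified_rejected
    rows += [{"qualified": False, "accepted": False}] * unqualified_rejected
    return rows

# Two groups of 100 people with different shares of qualified individuals.
group_a = make_group(qualified_accepted=54, qualified_rejected=6, unqualified_rejected=40)
group_b = make_group(qualified_accepted=27, qualified_rejected=3, unqualified_rejected=70)

for name, rows in (("group_a", group_a), ("group_b", group_b)):
    print(name, "selection rate:", selection_rate(rows),
          "true positive rate:", true_positive_rate(rows))
```

In this toy example the model satisfies equal opportunity, since qualified people are accepted at the same rate in both groups, yet it violates demographic parity because the overall acceptance rates differ. Forcing the acceptance rates to match would break the first property.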

There is no single answer to this question. Each use case requires its own reflection on the field of application. In the case of identity verification, for example, Onfido uses the “normalized rejection rate” which involves measuring the rejection rate by the system for each group and comparing it to the overall population. A rate greater than 1 corresponds to an over-rejection of the group, while a rate less than 1 corresponds to an under-rejection of the group.

In an ideal world, this normalized rejection rate would be 1 for all groups. In practice, this is not the case for at least two reasons: first, because the datasets necessary to achieve this objective are not necessarily available; and second, because certain confounding variables are not within our control (this is the case, for example, with the quality of identity documents mentioned in the example above).
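The normalized rejection rate described above can be sketched in a few lines of Python. This is an illustration of the definition given in the text, not Onfido's actual implementation; the group names and counts are hypothetical.

```python
def normalized_rejection_rates(counts):
    """Return each group's rejection rate divided by the overall rejection rate.

    counts maps a group name to a (rejections, attempts) pair.
    """
    total_rejections = sum(rej for rej, _ in counts.values())
    total_attempts = sum(n for _, n in counts.values())
    if total_attempts == 0 or total_rejections == 0:
        raise ValueError("need at least one attempt and one rejection overall")
    overall = total_rejections / total_attempts
    return {group: (rej / n) / overall for group, (rej, n) in counts.items()}

# Hypothetical counts: (rejections, attempts) per group.
counts = {
    "group_a": (30, 1000),
    "group_b": (50, 1000),
    "group_c": (40, 1000),
}

for group, ratio in normalized_rejection_rates(counts).items():
    status = "over-rejected" if ratio > 1 else "under-rejected" if ratio < 1 else "at parity"
    print(f"{group}: {ratio:.2f} ({status})")
```

In practice each ratio would be reported alongside a confidence interval, since a ratio that differs from 1 on a small group may simply reflect sampling noise.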

Striving for perfection hinders progress

It is not possible to completely eliminate bias. Therefore, it is vital to measure the bias, continuously reduce it, and openly communicate about the system’s limitations.

Research on bias is widely accessible, with numerous publications available on the topic. Major companies such as Google and Meta continue to contribute to this knowledge by publishing in-depth technical articles, as well as accessible articles, training materials, and dedicated datasets for bias analysis. For instance, last year Meta released the Conversational Dataset, which focuses on bias analysis in models.

As AI developers continue to innovate and applications evolve, biases will surface. Nevertheless, this should not discourage organizations from implementing these advanced technologies, as they hold the potential to greatly enhance digital services.

By implementing effective measures to mitigate bias, companies can ensure ongoing improvements in customers’ digital experiences. Customers will benefit from access to the right services, the ability to adapt to new technologies, and the necessary support from the companies they interact with.

This article was produced as part of TechRadarPro’s Expert Insights channel where we feature the best and brightest minds in the technology industry today. The views expressed here are those of the author and are not necessarily those of TechRadarPro or Future plc. If you are interested in contributing find out more here: https://www.techradar.com/news/submit-your-story-to-techradar-pro