OpenAI just held its eagerly anticipated spring update event, making a series of announcements and demonstrating the eye- and ear-popping capabilities of its newest GPT AI models. There were changes to model availability for all users, and at the center of the hype and attention was GPT-4o.

Coming just 24 hours before Google I/O, the launch puts Google’s Gemini in a new perspective. If GPT-4o is as impressive as it looked, Google’s anticipated Gemini update had better be mind-blowing.

What’s all the fuss about? Let’s dig into all the details of what OpenAI announced.

1. The announcement and demonstration of GPT-4o, and that it will be available to all users for free

The biggest announcement of the stream was the unveiling of GPT-4o (the ‘o’ standing for ‘omni’), which combines audio, visual, and text processing in real time. Eventually, this version of OpenAI’s GPT technology will be made available to all users for free, with usage limits.

For now, though, it’s being rolled out to ChatGPT Plus users, who will get up to five times the messaging limits of free users. Team and Enterprise users will also get higher limits and access to it sooner.

GPT-4o will have GPT-4’s intelligence, but it’ll be faster and more responsive in daily use. Plus, you’ll be able to give it, or ask it to generate, any combination of text, image, and audio.

The stream saw Mira Murati, Chief Technology Officer at OpenAI, and two researchers, Mark Chen and Barret Zoph, demonstrate GPT-4o’s real-time responsiveness in conversation while using its voice functionality.

The demo began with a conversation about Chen’s mental state, with GPT-4o listening and responding to his breathing. It then told Barret a bedtime story, making its voice increasingly dramatic on request. It was even asked to talk like a robot.

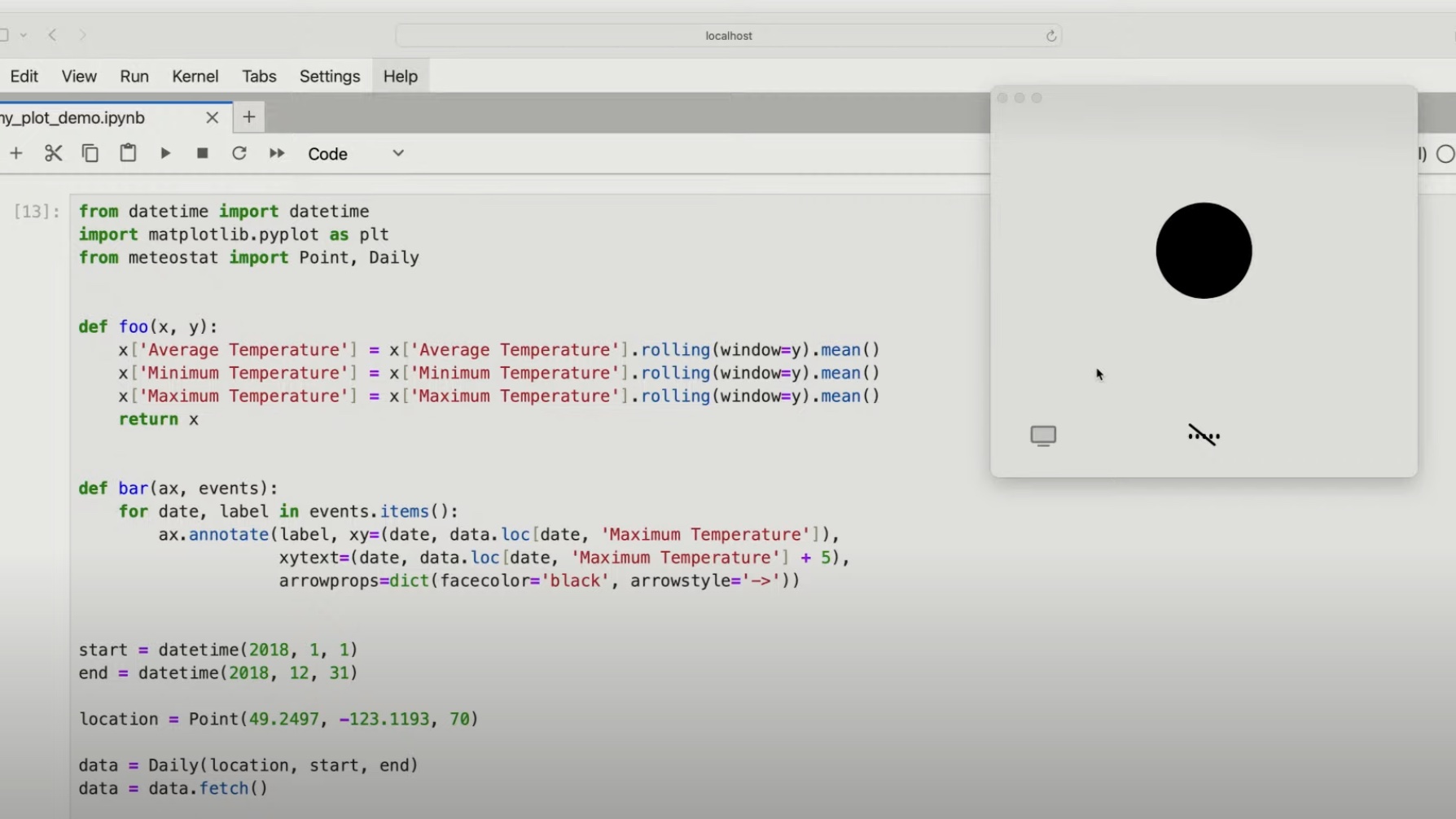

It continued with a demonstration of Barret “showing” GPT-4o a mathematical problem and the model guiding him through solving it with hints and encouragement. Chen asked why this specific mathematical concept was useful, and the model answered at length.

They followed this up by showing GPT-4o some code, which it explained in plain English, and then asked it for feedback on the plot that the code generated. The model talked about notable events, the axis labels, and the range of inputs. This was to show OpenAI’s continued commitment to improving GPT models’ interaction with code bases and their mathematical abilities.

The penultimate demonstration was an impressive display of GPT-4o’s linguistic abilities, as it translated a spoken conversation between English and Italian in real time.

Lastly, OpenAI provided a brief demo of GPT-4o’s ability to identify emotions from a selfie sent by Barret, noting that he looked happy and cheerful.

If the AI model works as demonstrated, you’ll be able to speak to it more naturally than many existing generative AI voice models and other digital assistants. You’ll be able to interrupt it instead of having a turn-based conversation, and it’ll continue to process and respond – similar to how we speak to each other naturally. Also, the lag between query and response, previously about two to three seconds, has been dramatically reduced.

ChatGPT equipped with GPT-4o will roll out over the coming weeks, free to try. This comes a few weeks after OpenAI made ChatGPT available to try without signing up for an account.

2. Free users will have access to the GPT store, the memory function, the browse function, and advanced data analysis

GPTs are custom chatbots created by OpenAI and ChatGPT Plus users for more specific conversations and tasks. Now, many more users can access them in the GPT Store.

Additionally, free users will be able to use ChatGPT’s memory functionality, which makes it a more helpful tool by giving it a sense of continuity across conversations. Also being added to the no-cost plan are ChatGPT’s vision capabilities, which let you converse with the bot about uploaded items like images and documents. The browse function lets ChatGPT pull in up-to-date information from the web when answering.

ChatGPT’s abilities have improved in quality and speed in 50 languages, supporting OpenAI’s aim to bring its powers to as many people as possible.

3. GPT-4o will be available in API for developers

OpenAI’s latest model will be available for developers to incorporate into their AI apps as a text and vision model. Support for GPT-4o’s video and audio abilities will launch soon, initially for a small group of trusted partners in the API.
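For developers wondering what a combined text and vision request might look like, here is a minimal sketch. It only builds the JSON body and sends nothing. It assumes the model is exposed under the identifier `gpt-4o` and accepts the message format OpenAI’s Chat Completions API already uses for image inputs; the helper name `build_vision_request` and the example URL are hypothetical.

```python
import json


def build_vision_request(prompt: str, image_url: str, model: str = "gpt-4o") -> dict:
    """Build a Chat Completions-style request body that mixes text and an image.

    Assumption: "gpt-4o" is the model identifier and the existing
    text + image_url content format applies to it.
    """
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                # A single user turn can carry several content parts.
                "content": [
                    {"type": "text", "text": prompt},
                    {"type": "image_url", "image_url": {"url": image_url}},
                ],
            }
        ],
    }


if __name__ == "__main__":
    # Hypothetical image URL, used purely for illustration.
    body = build_vision_request(
        "What does this chart show?", "https://example.com/chart.png"
    )
    print(json.dumps(body, indent=2))
```

The resulting body would be posted to the API with an API key. Audio and video inputs are left out because, per the announcement, they are not yet generally available in the API.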

4. The new ChatGPT desktop app

OpenAI is releasing a desktop app for macOS to advance its mission of making its products as easy and frictionless to use as possible, wherever you are and whichever model you’re using, including the new GPT-4o. You’ll be able to assign keyboard shortcuts to carry out tasks even more quickly.

According to OpenAI, the desktop app is available to ChatGPT Plus users now and will be available to more users in the coming weeks. It sports a similar design to the updated interface in the mobile app as well.

5. A refreshed ChatGPT user interface

ChatGPT is getting a more natural and intuitive user interface, refreshed to make interaction with the model easier and less jarring. OpenAI wants to get to the point where you barely focus on the AI and ChatGPT simply feels friendlier. This means a new home screen, message layout, and other changes.

6. OpenAI’s not done yet

The mission is bold, with OpenAI looking to demystify technology while creating some of the most complex technology that most people can access. Murati wrapped up by saying that OpenAI will soon share updates on what it’s preparing to show next, and by thanking Nvidia for providing the most advanced GPUs to make the demonstration possible.

OpenAI is determined to shape our interaction with devices, closely studying how humans interact with each other and trying to apply what it learns to its products. The latency involved in processing all the different nuances of interaction is part of what dictates how we behave with products like ChatGPT, and OpenAI has been working hard to reduce it. As Murati puts it, the model’s capabilities will continue to evolve, and it’ll get even better at helping you with exactly what you’re doing or asking about at exactly the right moment.