Was I imagining things or did it look like the iPhone 15 Pro screen was distorting every time someone summoned the new Siri? Nope. It’s a clever bit of screen animation that’s part of Apple’s upcoming iOS 18 Apple Intelligence integration, which spans everything from the Notes and Messaging apps to Siri and powerful new Photos tools.

Apple unveiled its new suite of Apple Intelligence capabilities on Monday at WWDC 2024 during a breakneck keynote where it was hard to keep track of all the new features, artificial intelligence, platforms, and app updates.

Now, however, I’ve seen some of these new features up close and noticed some surprises, interesting choices, and one or two limitations that might frustrate consumers. Granted, Apple’s work on Apple Intelligence is just beginning, and what I’ve seen will likely look somewhat different when it arrives on iOS 18, iPadOS 18, and macOS Sequoia. The public betas aren’t even expected until next month.

New Siri does it different

But back to that new Siri interaction. The update arrives with iOS 18, but only those with iPhones that have the A17 Pro inside (specifically the iPhone 15 Pro and Pro Max) and all M-class Macs and iPads will be able to experience it. Now that I’ve seen it in action up close, that seems like a real shame. As Apple’s Craig Federighi explained, older Apple mobile CPUs just don’t have enough power.

Nothing changes about how you activate Siri. You can call it by its name (with or without “Hey”) or press the power/sleep button. That move often looks like you’re squeezing the phone, and the new Siri gets that. A press of the button and the screen’s black bezel distorts. It flexes in, so it looks like you are really squishing the whole phone. At the same time, the bezel lights up with iridescent colors that are not randomly pulsing. Instead, the glow responds to your voice. Those animations, however, will not appear on iPhones that do not support Apple Intelligence.

Apple Intelligence lets new Siri carry on a conversation that at least provides some contextual runway. Ask how your favorite team is doing, and it can speak (in the demo I saw, it was not speaking). It can also show you, for example, the Mets’ current standings in the MLB (not good). The follow-up query might just be asking about the next game without mentioning the team or that you want to know anything about the Mets. If there’s a game you want to attend, you could ask Siri to add it to your calendar. Again, no mention of the Mets or their schedule. I’d call this task-based content. It’s still unclear if Siri can carry on a conversation in the vein of OpenAI’s GPT-4o, though.

The new Siri can be sneaky when you want it to be, accepting text input (Type to Siri) that you summon with a new double-tap gesture at the bottom of the screen. I’m not certain if Apple is going overboard with all these glowing boxes and borders, but yes, the Type to Siri box glows. It’s also accessible from virtually anywhere on the iPhone, including in apps.

Photos

Apple is undeniably playing catch-up in the generative image space, especially in photo editing, where it finally applies the powerful lift-subject features introduced two iOS generations ago and enhances them with Apple Intelligence to remove background distractions and then fill in the blanks. Like other Apple Intelligence features, Photo Cleanup will only extend to iPhones running the A17 Pro chip. Still, it is an impressive bit of AI programming.

Cleanup will live inside Photos under Edit. Apple chose what appears to be an eraser icon to represent the feature. Yes, it’s sort of a magic eraser. When you select it, a message tells you to “Tap, brush, or circle what you want to remove.”

In practice, the feature appears smart, easy to use, and quite powerful. I saw how you could circle an unwanted person in the background of the photo; there’s no need to carefully circle only the distraction and not the subject. Apple Intelligence purposely won’t let you erase subjects. As soon as a distraction is circled and identified, you hit check, and it disappears. When Cleanup vaporized a couple from a pleasant landscape, it was cool and a little disturbing.

Just so no one gets confused about pure photography versus Apple Intelligence-assisted content, Apple does add a note to the meta information, “Apple Photos Cleanup.”

Instead of Apple auto-generating Memory movies, Apple Intelligence lets you write a prompt describing a set of, say, trips with a special person. You can even tell the system to include a kind of photo, like a landscape or selfie.

When Apple introduced this feature on stage, I noticed all the cool animations and assumed they were stagecraft. I was wrong. Watching “Create a Memory Movie” is in itself a visual treat, full of glowing photo squares with pictures flying in and out and text below that’s basically showing Apple Intelligence’s work.

The only bad news is that if you don’t like what Create a Memory Movie made, you can’t tell the system to alter the movie in a prompt. You have to start over.

Writing

Apple Intelligence and its various local generative models are almost as pervasive as the platforms. AI tools for improving and assisting writing can be found across macOS Sequoia apps like Mail and Notes.

I was somewhat surprised to see how it works. If you type something in Notes or Mail, you have to select some portion of the text to enable it. From there, you get a small app-color-coded icon where you can access proofreading and rewrite tools.

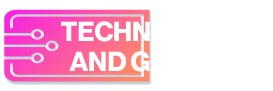

Apple Intelligence offers pop-ups to show its work and explain its choices. It appears just as adept at guiding your writing to make it more professional or conversational as it is at pulling together short or bulleted summaries of any selected text.

Phone a ChatGPT friend

Apple Intelligence works locally and with Private Cloud Compute. In most instances, Apple will not say when or if it’s using its cloud. This is because Apple considers its cloud just as private and secure as on-device AI. It will only tap into that cloud when the query overmatches the local system.

When Apple Intelligence decides that a third-party model might be better suited to handle your query, things are a bit different.

When it’s time to use OpenAI’s ChatGPT to figure out what you can cook with those fava beans you just photographed, Apple Intelligence will ask permission to send the prompt to ChatGPT. At least you don’t have to sign into ChatGPT and, better yet, you get to use the latest GPT-4o model.

The (magic) Image Wand

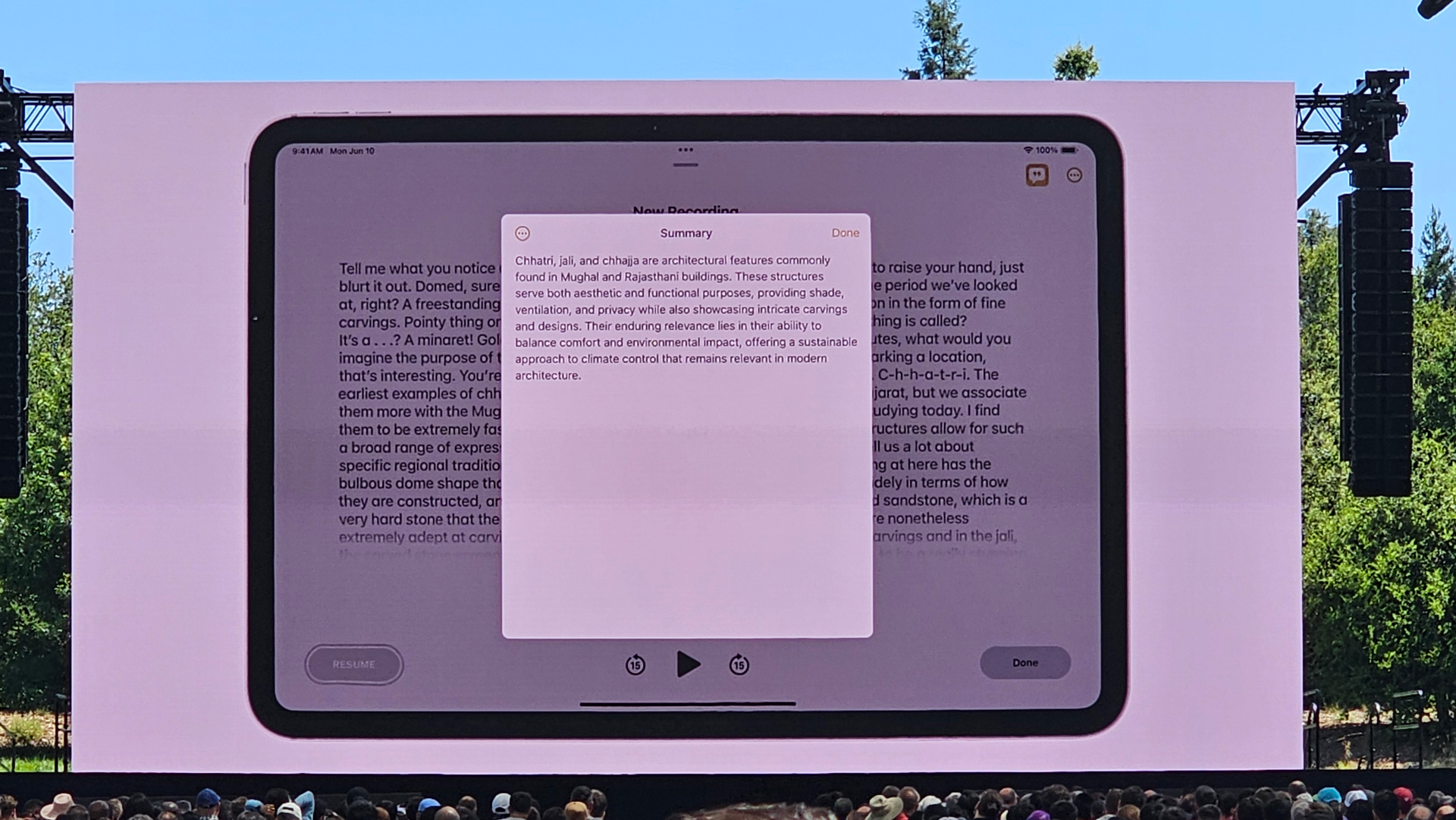

One of the coolest features I saw is the Image Wand, a tool that works especially well on an iPad with the Apple Pencil.

Apple didn’t call it a “magic wand,” but what it can conjure is sort of magical.

In Notes, you can circle any content, say a poorly done sketch of a dog and some words describing the dog or what you might want it doing (“playing with a ball”). Apple Intelligence works to generate a professional-looking image that combines both. So, the doodle and words become an illustration of a dog playing with a ball.

More impressively, you can circle the empty space next to your notes, and the system will input an image that works with the information. It’s also quite fast.

New reactions

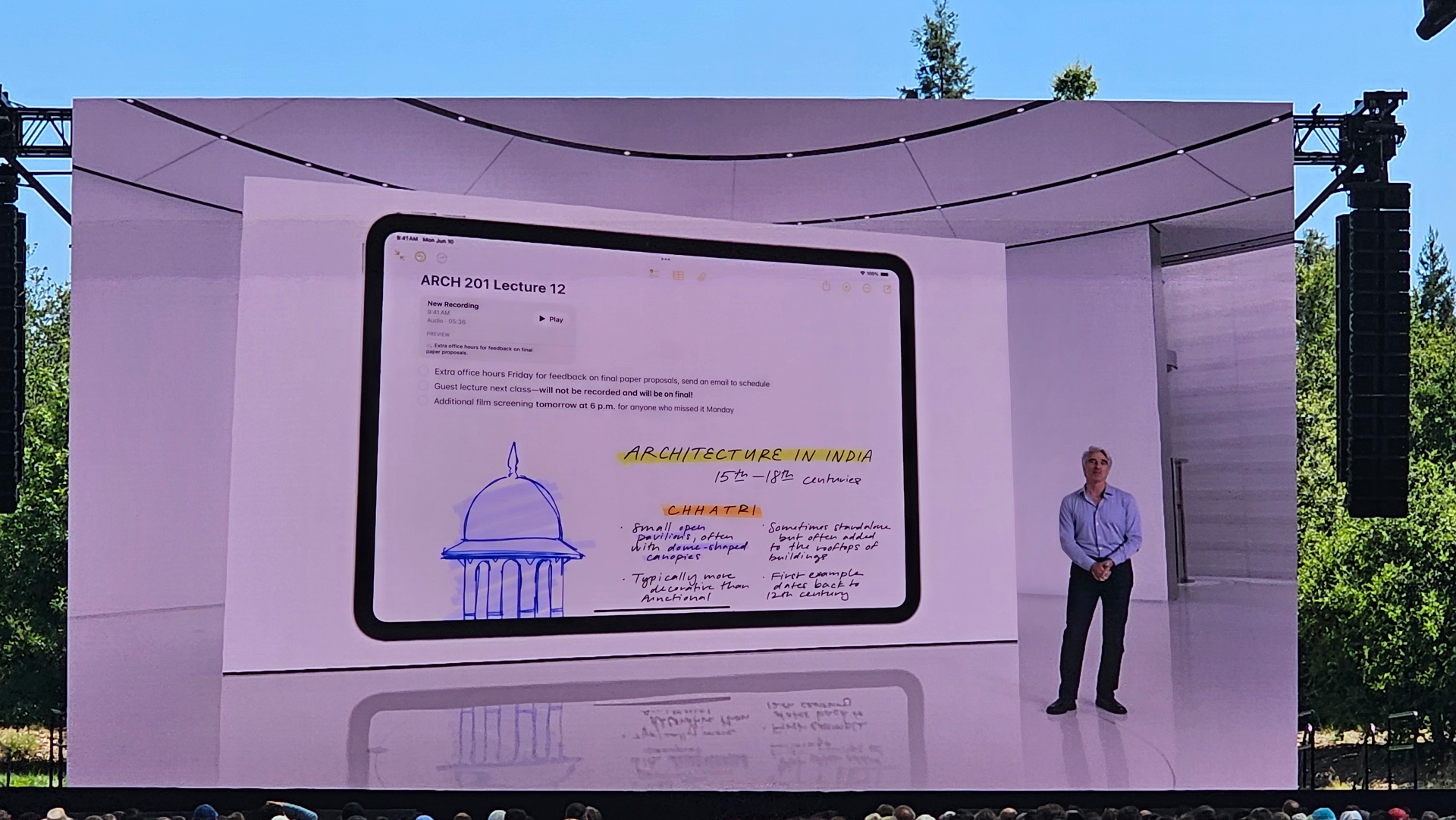

I also got a look at how the new Genmojis work. There are only so many official emojis you can have in messaging, but Genmoji casts aside those limits and replaces them with your imagination.

Genmoji lives in iMessage and you just tap an icon, enter your prompt, and it creates a cute and message-shareable image. I did learn that if you try to send one of these Genmojis to someone not running the latest iOS, they might see that someone reacted to your message but also get the new Genmoji delivered as a separate image message.

Naturally, this is all just scratching the surface of what will be possible with Apple Intelligence. It’s expected to permeate iOS, macOS and iPadOS, reaching into your data, apps, home screens, and more. It may also change your Apple ecosystem experience like nothing before.